Instagram rolls out new feature to alert parents about teen suicide-related searches

Instagram to alert parents if teens search for self-harm, suicide content

Published February 26, 2026

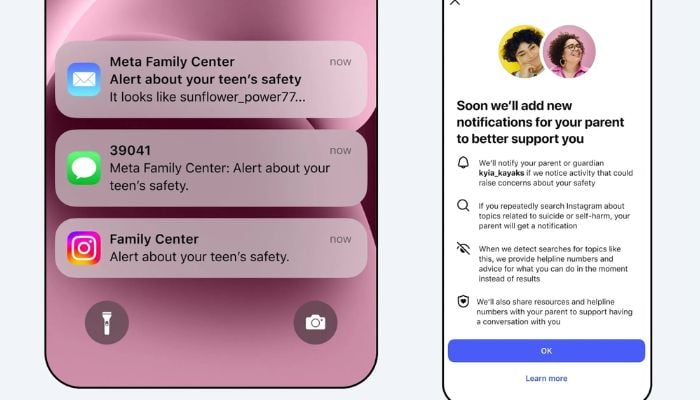

Instagram will notify parents when teens search for self-harm and suicide content.

Instagram will start alerting parents of teenagers who frequently search for suicide- and self-harm-related terms, the company said Thursday.

The new feature, which launches next week in the US, UK, Australia and Canada before rolling out worldwide, is intended for families that use Instagram's parental supervision features.

Parents will receive the alerts by email, text, WhatsApp or in the app itself when their teen repeatedly searches, within a relatively short time, for phrases that encourage suicide or self-harm.

According to Meta, the alerts will enable parents to step in when their teen's searches suggest the teenager might need support. The alerts will include expert resources to help parents navigate difficult conversations.

The news arrives as Meta faces pressure on two fronts related to child safety, with claims that its platforms deliberately addict minors and fail to protect them from harmful content. CEO Mark Zuckerberg recently appeared in Los Angeles Superior Court, where he denied that social media harms mental health.

Meta says it blocks suicide and self-harm content from search results on teen accounts and redirects users to helplines. The company also plans to introduce similar alerts in the coming months for teens' conversations with its AI chatbots.